Designing Intelligence in Interaction

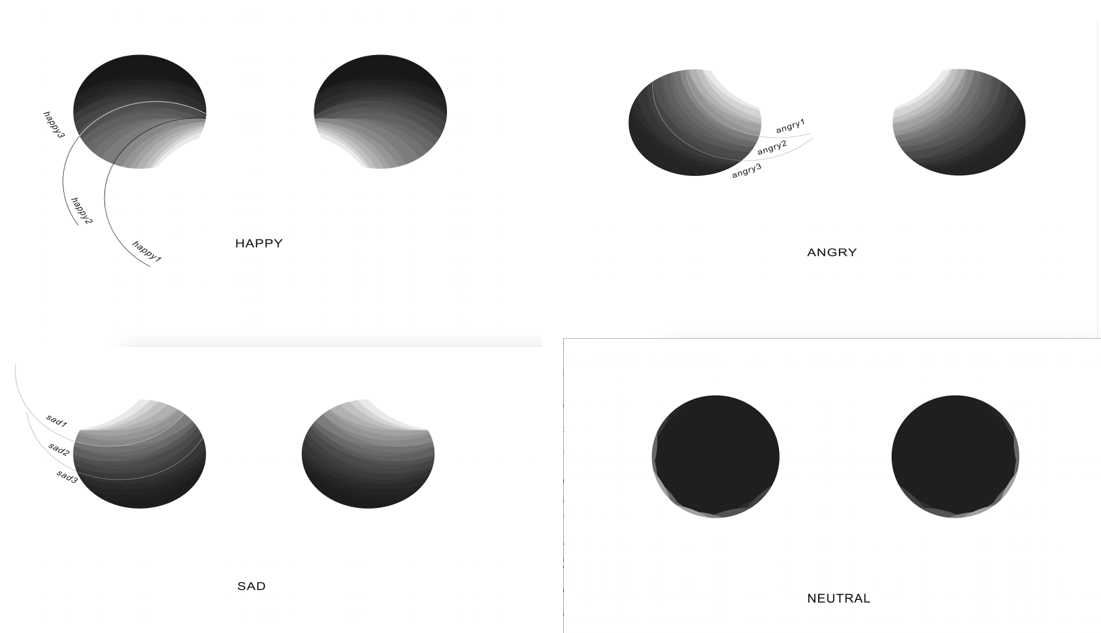

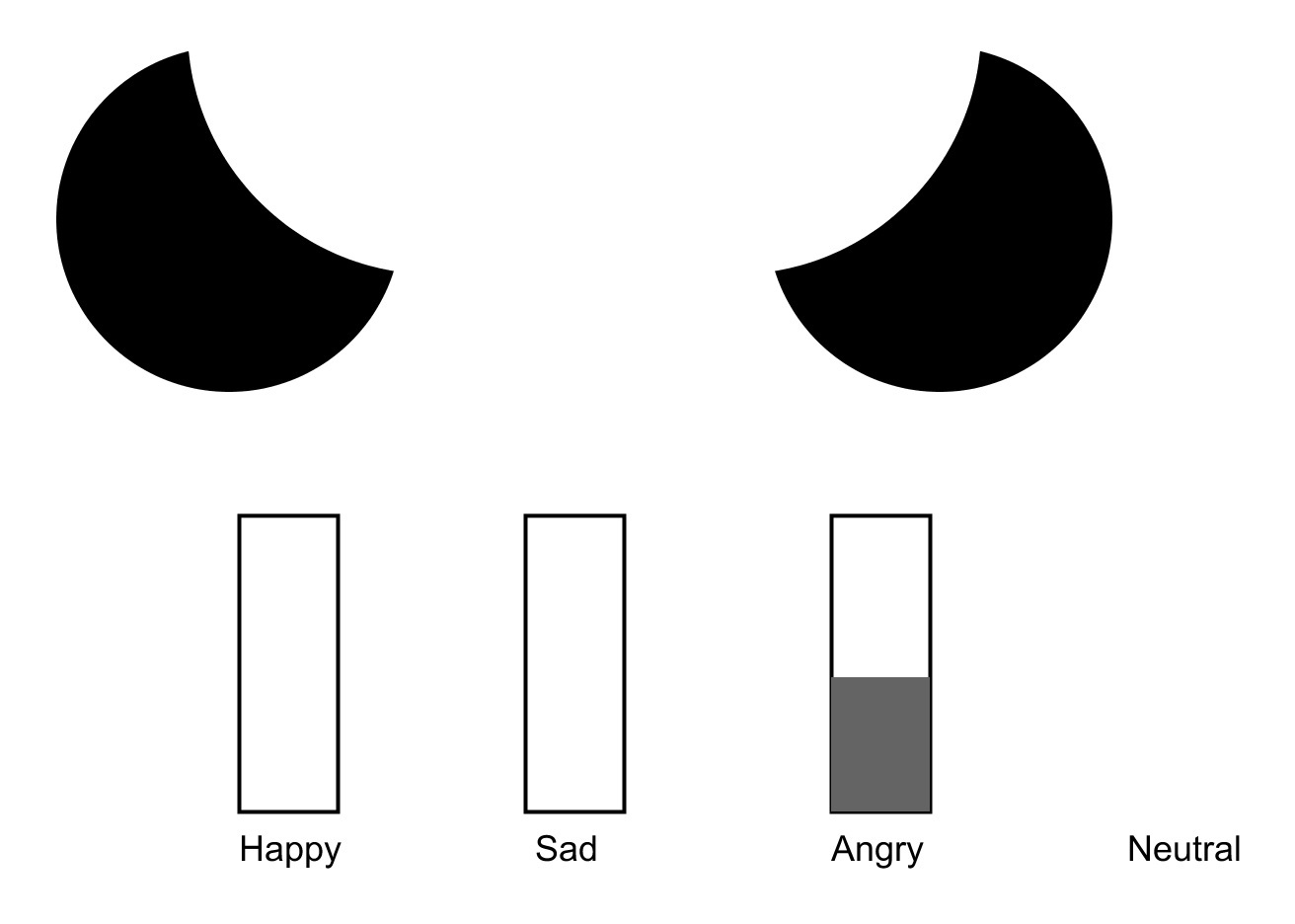

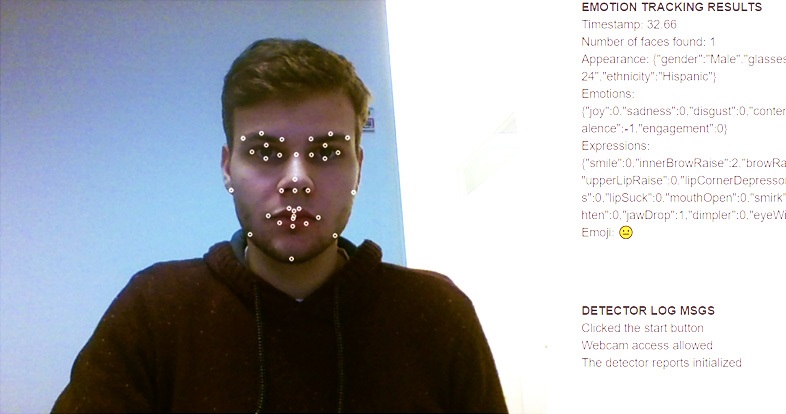

Robots are increasingly becoming part of our daily lives, which makes human-robot interaction an important topic to design for. In this course, we explored the technological possibilities of a domestic drone that builds a relationship with a human through emotion recognition. To determine emotion from the robot's perspective, we created a neural network that takes facial data captured by a webcam and extracted with an existing SDK. Trained on examples of different emotions, the network was able to distinguish between negative and positive emotion in real time. The user sees a screen showing the drone's eyes, which respond differently to the user's emotion depending on the relationship level. This research brings us one step closer to drones becoming part of our daily lives. The published paper can be found in the menu on the left.
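To make the classification step more concrete, here is a minimal sketch of learning a positive/negative split from labelled examples. It is not the network from the project: it uses a single sigmoid neuron as the simplest possible stand-in, and the two input features (`smile`, `brow_furrow`), the training values, and all function names are assumptions made up for illustration. The real system would take whatever facial measurements the SDK provides.

```python
import math

# Hypothetical training examples: (smile, brow_furrow) scores in [0, 1],
# labelled 1 for positive emotion and 0 for negative emotion.
EXAMPLES = [
    ((0.9, 0.1), 1), ((0.8, 0.2), 1), ((0.7, 0.0), 1),
    ((0.1, 0.8), 0), ((0.2, 0.9), 0), ((0.0, 0.7), 0),
]


def sigmoid(z):
    """Squash a raw score into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def activation(features, weights, bias):
    """Output of the single neuron for one feature vector."""
    return sigmoid(sum(w * x for w, x in zip(weights, features)) + bias)


def train(examples, epochs=2000, learning_rate=0.5):
    """Fit the neuron with plain stochastic gradient descent."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for features, label in examples:
            # Gradient of the log loss with respect to the raw score.
            error = activation(features, weights, bias) - label
            weights = [w - learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias


def classify(features, weights, bias):
    """Label a feature vector as positive or negative emotion."""
    return "positive" if activation(features, weights, bias) >= 0.5 else "negative"


WEIGHTS, BIAS = train(EXAMPLES)
print(classify((0.85, 0.1), WEIGHTS, BIAS))
print(classify((0.1, 0.9), WEIGHTS, BIAS))
```

In a live setting, `classify` would be called on each new set of facial features as frames arrive from the webcam, and its label would then drive how the drone's eyes respond.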